Chasing Performance in a System That Didn't Want to Be Fast

Every engineering team has a week that recalibrates their understanding of their own system. Ours was the first week of March.

We spent three weeks after that trying to make our platform faster. We shipped about 20 pull requests. We eliminated roughly 59,500 errors per week. We added tracing to every dark corner of our request pipeline. And at the end of it, the number our users would actually feel, tail latency on the pages they use all day, was effectively unchanged.

This is that story. Not because it ended in triumph, but because the failure mode is instructive and we think more teams should write about work that didn't land the way they expected.

It Happened on a Tuesday

Our platform handles equipment procurement for large fleet operators. The core workflow is quote-request-order: a buyer describes what they need, vendors respond with quotes, the buyer picks one and places an order. Most of our traffic is people cycling through list views, detail pages, and submission forms hundreds of times a day. When those pages are slow, the whole product is slow. There's nowhere to hide.

On a Tuesday morning in early March, median response times tripled and tail latency hit 11 seconds. It wasn't a deploy. It wasn't a traffic spike. It was a metastable failure.

A week earlier, an email webhook integration had broken during a DNS change that nobody noticed. Five days of events piled up in the provider's retry queue. When the webhook was quietly restored, the provider flushed the backlog — thousands of events replayed in rapid succession, each one triggering a synchronous HTTP call wrapped in a database transaction with a 240-second timeout.
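The risky shape in that handler is a database transaction held open across a network call. As a hedged sketch of one common alternative (not necessarily how this handler was or will be fixed), the event can be recorded in a short transaction, the HTTP call made with no transaction open, and the outcome stored in a second short transaction. The `webhook_events` table, the `call_provider` callback, and the use of SQLite as a stand-in for Postgres are all assumptions made for illustration.

```python
import sqlite3
from typing import Callable


def make_db() -> sqlite3.Connection:
    """In-memory SQLite database standing in for the real Postgres instance."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE webhook_events (id TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    conn.commit()
    return conn


def handle_webhook(
    conn: sqlite3.Connection,
    event_id: str,
    call_provider: Callable[[str], None],
) -> str:
    # Short transaction 1: record the event and commit immediately.
    with conn:
        conn.execute(
            "INSERT OR IGNORE INTO webhook_events (id, status) VALUES (?, 'received')",
            (event_id,),
        )

    # The slow network call runs while no transaction is open, so a hung
    # call does not pin a transaction for the length of its timeout.
    try:
        call_provider(event_id)
        status = "processed"
    except Exception:
        status = "failed"

    # Short transaction 2: store the outcome.
    with conn:
        conn.execute(
            "UPDATE webhook_events SET status = ? WHERE id = ?",
            (status, event_id),
        )
    return status
```

The trade-off is that the event row and the outcome are no longer written atomically, so a handler written this way needs to tolerate an event that stays in `received` after a crash.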

That was the trigger. The feedback loop was worse. As long-running transactions consumed database connections, legitimate queries started queuing. Queued queries timed out. Timeouts triggered client retries. Retries added more load to an already-saturated pool. The system stayed degraded for hours after the webhook replay finished, because the sustaining mechanism — retry pressure on an exhausted connection pool — was self-reinforcing. That's the signature of a metastable failure: a temporary trigger, a stable-looking system that was actually running near its limits, and a feedback loop that holds the failure state in place.

It didn't help that our hosting provider had changed their network routing around the same time, adding a few milliseconds to every database round-trip. Or that several frequently queried tables hadn't had their Postgres statistics refreshed in over a year, leaving the query planner to occasionally pick catastrophic execution plans on small tables that should have been trivial to scan.

There was no single root cause. The system was in what the metastability literature calls the "vulnerable state" — functioning normally, but with no margin. Any of these factors alone would have been absorbed. Together, they compounded.

Going Hunting

Once the acute incident resolved, we had a choice: patch the webhook handler and move on, or treat this as the opening to a broader investigation into why our system was running so close to its limits in the first place. We chose the latter and timeboxed it to two weeks.

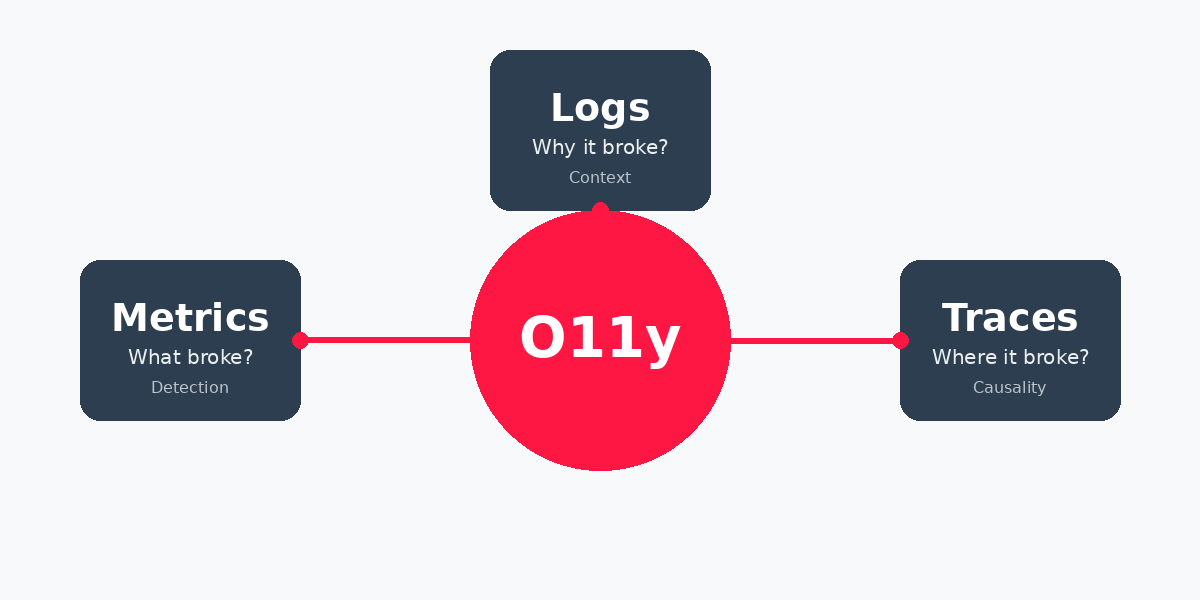

We started by pulling a full week of production traces and scoring every user-facing operation by a composite of three factors: how many users hit it per day, how bad the tail latency was, and how often it errored. The result was a ranked list of pain, and it looked about how you'd expect — the pages people use most were also the pages that hurt most. List views. Order management. Quoting workflows. Messaging.
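The exact scoring formula isn't the interesting part, but a minimal sketch helps show what "a composite of three factors" can look like. The formula below (users times tail latency, inflated by error rate), the `OperationStats` type, and every number in `SAMPLE_OPS` are assumptions for illustration, not the values or weights we actually used.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OperationStats:
    name: str
    daily_users: int
    p99_seconds: float
    error_rate: float  # fraction of requests that errored, 0.0 to 1.0


def pain_score(op: OperationStats) -> float:
    """Hypothetical composite: reach x tail latency, inflated by error rate."""
    return op.daily_users * op.p99_seconds * (1.0 + op.error_rate)


def rank_by_pain(ops: List[OperationStats]) -> List[OperationStats]:
    """Highest pain first."""
    return sorted(ops, key=pain_score, reverse=True)


# Invented sample data, for illustration only.
SAMPLE_OPS = [
    OperationStats("settings_page", daily_users=40, p99_seconds=1.0, error_rate=0.0),
    OperationStats("list_view", daily_users=900, p99_seconds=4.0, error_rate=0.07),
    OperationStats("inbox", daily_users=300, p99_seconds=3.0, error_rate=0.25),
]

for op in rank_by_pain(SAMPLE_OPS):
    print(f"{op.name}: {pain_score(op):.0f}")
```

Any monotonic combination of the three factors would produce a similar ordering at the top of the list; the point is to rank by user impact rather than by raw slowness.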

But the ranked list wasn't the important finding. This was: **97% of our latency was invisible.**

Our slowest traced request took 22 seconds. It contained 6 instrumented spans totaling 500 milliseconds. The other 21.5 seconds were spent inside application code that had no child spans — resolver functions, authorization checks, batch loaders, business logic. We had full distributed tracing set up. We had dashboards. We had alerts. And we were blind to where virtually all of the time went.

You can't fix what you can't see. So that became priority one.
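For the single 22-second trace described above, the arithmetic is simple enough to write down. This sketch assumes the instrumented spans don't overlap, so their durations can be summed; the function name is hypothetical.

```python
def unexplained_fraction(total_ms: float, explained_ms: float) -> float:
    """Share of a trace's wall-clock time not covered by any child span.

    Assumes the instrumented spans do not overlap, so explained_ms is
    simply the sum of their durations.
    """
    if total_ms <= 0:
        raise ValueError("total_ms must be positive")
    return max(total_ms - explained_ms, 0.0) / total_ms


# The slowest trace: 22 seconds total, 500 ms inside instrumented spans.
fraction = unexplained_fraction(total_ms=22_000, explained_ms=500)
print(f"{fraction:.1%} of the trace has no span covering it")  # prints 97.7% ...
```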

What We Actually Shipped

Over three weeks we merged about 20 pull requests and closed 30 issues. They fell into five categories, and the returns were uneven.

Making the System Legible

We added tracing spans across our five slowest resolver chains and all of our batch-loading functions. We enriched error traces with the metadata that had been missing — the actual error message, which user triggered it, which resolver produced it. We shipped database metrics to our observability platform so we could look at query performance historically instead of scrambling to investigate after something broke.

None of this made the app faster. All of it made the app legible. Before this work, debugging a slow page load meant staring at a 22-second trace with 500 milliseconds of explained time. After, we could see exactly which batch loader was taking 7 seconds, which table scan was reading millions of rows, which resolver was issuing 130 individual database queries when it should have issued one.

This was the most valuable work of the entire sprint. Not the most dramatic, not the most satisfying — but everything that follows depends on the system being readable. If we'd skipped this and gone straight to query optimization, we'd have been guessing. We know, because that's what we'd been doing before.
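The resolver issuing 130 individual queries is the classic N+1 shape, and it is easy to miss without spans. Here is a minimal sketch of the difference between the per-item and batched forms. The `quotes` table, the column names, and the use of SQLite in place of Postgres are assumptions for illustration; the real resolvers go through batch loaders rather than raw SQL like this.

```python
import sqlite3
from collections import defaultdict
from typing import Dict, List, Tuple


def make_db() -> sqlite3.Connection:
    """130 hypothetical orders with two quotes each."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE quotes (id INTEGER PRIMARY KEY, order_id INTEGER NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO quotes (order_id) VALUES (?)",
        [(order_id,) for order_id in range(1, 131) for _ in range(2)],
    )
    conn.commit()
    return conn


def quotes_n_plus_one(
    conn: sqlite3.Connection, order_ids: List[int]
) -> Tuple[Dict[int, List[int]], int]:
    """One query per order: 130 orders means 130 round-trips."""
    result: Dict[int, List[int]] = {}
    queries = 0
    for order_id in order_ids:
        rows = conn.execute(
            "SELECT id FROM quotes WHERE order_id = ? ORDER BY id", (order_id,)
        ).fetchall()
        queries += 1
        result[order_id] = [row[0] for row in rows]
    return result, queries


def quotes_batched(
    conn: sqlite3.Connection, order_ids: List[int]
) -> Tuple[Dict[int, List[int]], int]:
    """One query for all orders, grouped in application code."""
    if not order_ids:
        return {}, 0
    placeholders = ",".join("?" for _ in order_ids)
    rows = conn.execute(
        f"SELECT order_id, id FROM quotes "
        f"WHERE order_id IN ({placeholders}) ORDER BY id",
        order_ids,
    ).fetchall()
    grouped: Dict[int, List[int]] = defaultdict(list)
    for order_id, quote_id in rows:
        grouped[order_id].append(quote_id)
    return {order_id: grouped.get(order_id, []) for order_id in order_ids}, 1
```

Both functions return the same data. The second does it in one round-trip, which matters more once every round-trip carries extra network latency.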

Shutting Up the False Alarms

Five high-traffic operations had error rates between 7% and 35%. Inventory search failed a third of the time. The messaging inbox failed nearly a quarter of the time. The application shell — the query that runs on every page navigation — failed 7% of the time.

Most of these weren't real failures. They were the application treating expected conditions as errors. An organization without a particular integration configured would throw an error instead of returning an empty result. An expired authentication token would cause a GraphQL error instead of redirecting to login. The user would see a blank screen or a spinner that never resolved, and would usually just refresh — which worked, because the "error" was transient.

One pull request fixed all five. We returned empty results for unconfigured integrations and redirected to login on auth failures instead of throwing. Roughly 59,500 weekly errors disappeared overnight.

This matters beyond the obvious. Every false error generates a retry. Every retry adds load. Every bit of added load pushes real operations closer to their timeouts. In a system that was already running near its connection pool limits, tens of thousands of phantom retries per week were meaningful background pressure. Removing them didn't make pages faster, but it made the system's behavior more predictable and gave real queries more room to breathe.
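The unconfigured-integration half of that fix is small enough to sketch. The names here (`Organization`, `IntegrationNotConfigured`, `search_inventory_before`, `search_inventory_after`) are hypothetical stand-ins, and the real code lives in GraphQL resolvers rather than plain functions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


class IntegrationNotConfigured(Exception):
    """Raised by the old code path for an expected, non-exceptional condition."""


@dataclass
class Organization:
    id: str
    # Maps an integration name to a search callable; empty if none configured.
    integrations: Dict[str, Callable[[str], List[str]]] = field(default_factory=dict)


def search_inventory_before(org: Organization, query: str) -> List[str]:
    # Old behaviour: a missing integration surfaces as an error, which the
    # client treats as a failure and retries.
    if "inventory" not in org.integrations:
        raise IntegrationNotConfigured(org.id)
    return org.integrations["inventory"](query)


def search_inventory_after(org: Organization, query: str) -> List[str]:
    # New behaviour: "nothing configured" is an expected state, so the
    # answer is simply an empty result.
    search = org.integrations.get("inventory")
    if search is None:
        return []
    return search(query)
```

Real failures inside a configured integration still raise in both versions; only the expected "not configured" case changes.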

Fixing Queries (The Part Everyone Expects)

This is the work that looks best in a pull request description. We fixed N+1 query patterns across several list pages and detail views. We added missing database indexes — one table handling thousands of daily queries had no index on its most-filtered column. We eliminated a sequential scan that was reading 92 million tuples on a small table that didn't need to be scanned at all. We removed a legacy intermediate step from our quoting pipeline that was creating a throwaway record through a dozen individual inserts, only to read it back and transform it into the final record. Removing it saved about 3 seconds per quote submission.

Individual queries got measurably faster. Some dramatically so.

Overall tail latency didn't change.

The p99 across all user-facing operations stayed between roughly 4 and 5 seconds throughout the sprint, with no visible trend break after any deployment. We checked daily values, not just week-over-week aggregates. That was an important lesson we learned midway through, when a promising-looking improvement turned out to be an artifact of comparing a 7-day window (which captured transient spikes) against a 2-day window (which hadn't had time to capture any).
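The window artifact is easy to reproduce with synthetic data. In this sketch every latency value is invented: ordinary days are identical before and after the "improvement", and the only difference is that the 7-day window happens to contain one spike day. The nearest-rank `p99` helper is an assumption too; observability platforms may compute percentiles differently.

```python
from typing import List


def p99(samples: List[float]) -> float:
    """Nearest-rank 99th percentile; the rank is computed with integers."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (99 * len(ordered) + 99) // 100  # ceil(0.99 * n)
    return ordered[rank - 1]


def pooled(days: List[List[float]]) -> List[float]:
    """Flatten several days of samples into one window."""
    return [sample for day in days for sample in day]


# Invented latencies in seconds, 100 requests per day.
NORMAL_DAY = [1.0] * 98 + [4.5] * 2
SPIKE_DAY = [1.0] * 90 + [9.0] * 10

before_days = [NORMAL_DAY] * 3 + [SPIKE_DAY] + [NORMAL_DAY] * 3  # 7-day window
after_days = [NORMAL_DAY] * 2                                    # 2-day window

pooled_before = p99(pooled(before_days))
pooled_after = p99(pooled(after_days))
daily_before = [p99(day) for day in before_days]
daily_after = [p99(day) for day in after_days]

print("pooled:", pooled_before, "->", pooled_after)
print("daily before:", daily_before)
print("daily after: ", daily_after)
```

The pooled number appears to halve, while the daily series shows that ordinary days did not change at all.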

The reason the aggregate didn't move is simple math. The operations we optimized were real improvements, but they weren't large enough fractions of total traffic to register. A single high-frequency query that fires on every page navigation — one we barely touched — accounted for nearly 300,000 calls per week at a 3.5-second p99. It anchored the overall number. Improving a query that runs 5,000 times a week doesn't register in the presence of that.
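That math can be checked with a toy model. The call volumes below are the 300,000 and 5,000 weekly calls scaled down by 100; the latency distributions are invented (chosen so the hot query has a 3.5-second p99), and all other traffic is ignored for simplicity. The nearest-rank `p99` helper is likewise an assumption.

```python
from typing import List


def p99(samples: List[float]) -> float:
    """Nearest-rank 99th percentile; the rank is computed with integers."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (99 * len(ordered) + 99) // 100  # ceil(0.99 * n)
    return ordered[rank - 1]


# Hot query: 3,000 calls, slightly over 1% of them slow.
hot_query = [0.5] * 2960 + [3.5] * 40

# Small query: 50 calls, with a bad tail before the fix and none after.
small_before = [1.0] * 45 + [8.0] * 5
small_after = [0.2] * 50

print("small query p99:", p99(small_before), "->", p99(small_after))
print(
    "overall p99:    ",
    p99(hot_query + small_before),
    "->",
    p99(hot_query + small_after),
)
```

In this model the optimized query's own p99 drops from 8 seconds to 0.2 seconds, and the overall p99 stays pinned at the hot query's 3.5 seconds.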

Going Async (The Part That Didn't Ship)

Our slowest user-facing operation was quote submission. Median: 11 seconds. Tail: 44 seconds. Users would click submit and stare at a spinner for the better part of a minute, with no idea whether their work was saved. If the request timed out, they had to fill out the form again.

We built a capture-then-process pipeline. Accept the user's input immediately, return a submission ID, process the quote asynchronously in the background, and push status updates back through a real-time subscription. The pattern is well-established and the idea was sound.
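As a rough illustration of the pattern (not our actual implementation), the sketch below uses an in-memory queue and a `notify` callback standing in for the real-time subscription. The class name, status strings, and method names are all hypothetical, and a real version would persist the captured payload durably before acknowledging.

```python
import uuid
from collections import deque
from typing import Any, Callable, Deque, Dict


class QuotePipeline:
    """Capture the input first, process it later, report status as it changes."""

    def __init__(
        self,
        process: Callable[[Dict[str, Any]], None],
        notify: Callable[[str, str], None],
    ) -> None:
        self._process = process
        self._notify = notify
        self._queue: Deque[str] = deque()
        self.payloads: Dict[str, Dict[str, Any]] = {}
        self.status: Dict[str, str] = {}

    def submit(self, payload: Dict[str, Any]) -> str:
        """Store the whole payload and return an ID without doing the slow work."""
        submission_id = str(uuid.uuid4())
        self.payloads[submission_id] = dict(payload)
        self._set_status(submission_id, "received")
        self._queue.append(submission_id)
        return submission_id

    def process_next(self) -> bool:
        """One worker step. Returns False when there is nothing to process."""
        if not self._queue:
            return False
        submission_id = self._queue.popleft()
        self._set_status(submission_id, "processing")
        try:
            self._process(self.payloads[submission_id])
        except Exception:
            # The captured payload is still stored, so the user's work is not lost.
            self._set_status(submission_id, "failed")
        else:
            self._set_status(submission_id, "completed")
        return True

    def _set_status(self, submission_id: str, status: str) -> None:
        self.status[submission_id] = status
        self._notify(submission_id, status)
```

The user-facing request only pays for `submit`; the slow work moves into `process_next`, which a background worker would call in a loop.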

It worked in staging. The pipeline covered five user-facing quoting operations across three different applications. But one of the five had a regression — a required field that wasn't being passed through to the async worker — and the scope had grown larger than we intended. Five operations across three apps is a lot of critical paths to change in a single deployment. A bug in any one of them means a user can't submit a quote, and if a user can't submit a quote, they can't do business. That's not a "we'll fix it in the next release" situation.

We pulled it back before it reached production. The backend infrastructure is solid and still in the codebase, ready for a more incremental rollout — one operation at a time, behind a feature flag, with a kill switch. We'll get there. But not by being in a hurry.
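The rollout shape described above can be sketched in a few lines. This is an assumption about how such a gate might look, not code from our repository: `AsyncRollout`, the operation names, and the handler signatures are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Set

Handler = Callable[[Dict[str, Any]], str]


@dataclass
class AsyncRollout:
    """Per-operation flag plus a global kill switch."""

    kill_switch: bool = False
    enabled_operations: Set[str] = field(default_factory=set)

    def use_async(self, operation: str) -> bool:
        # The kill switch wins over every per-operation flag.
        return not self.kill_switch and operation in self.enabled_operations


def submit(
    operation: str,
    payload: Dict[str, Any],
    rollout: AsyncRollout,
    sync_path: Handler,
    async_path: Handler,
) -> str:
    """Route to the async pipeline only for operations that are switched on."""
    handler = async_path if rollout.use_async(operation) else sync_path
    return handler(payload)
```

Enabling one operation at a time means adding a single name to `enabled_operations`; flipping `kill_switch` sends every operation back to the existing synchronous path without a deploy, provided the flag is read from live configuration.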

Infrastructure Housekeeping

We archived 93 gigabytes of stale request logging data that had been quietly growing in our production database. We scaled down from four production nodes to three after confirming the fourth was adding cost without handling meaningful traffic. We manually ran ANALYZE on tables where Postgres auto-analyze had stopped triggering because row changes on those tables stayed below the default threshold, even though the tables were being queried hundreds of thousands of times per day with stale statistics.

None of this is interesting. All of it matters.
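The auto-analyze behaviour is worth spelling out, because it is easy to assume statistics refresh on their own. Postgres triggers an automatic ANALYZE once the rows changed since the last one exceed `autovacuum_analyze_threshold + autovacuum_analyze_scale_factor * row count`, with defaults of 50 and 0.1. The sketch below just evaluates that formula; the 200-row table and the change counts are invented examples, not figures from our database.

```python
def autoanalyze_threshold(
    row_count: int,
    base_threshold: int = 50,   # Postgres default autovacuum_analyze_threshold
    scale_factor: float = 0.1,  # Postgres default autovacuum_analyze_scale_factor
) -> float:
    """Rows that must change since the last ANALYZE before auto-analyze runs."""
    return base_threshold + scale_factor * row_count


def autoanalyze_would_trigger(rows_changed: int, row_count: int) -> bool:
    return rows_changed > autoanalyze_threshold(row_count)


# Hypothetical small lookup table: 200 rows, read constantly, rarely written.
print(autoanalyze_threshold(200))
print(autoanalyze_would_trigger(rows_changed=40, row_count=200))
print(autoanalyze_would_trigger(rows_changed=80, row_count=200))
```

Read volume never enters the formula. A table can be queried constantly and still keep statistics from whenever it was last written to heavily.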

The Uncomfortable Part

After three weeks of focused performance work, the overall user-facing tail latency is the same. The p99 on deployment days was 4.8 seconds. The p99 the week before was 4.5 to 5.2 seconds. Within noise.

The app does not feel faster to our users. The pages they load hundreds of times a day still take multiple seconds. The quoting flow still takes 10 to 15 seconds on a good submission.

This is the part of performance work that doesn't make it into most engineering blogs. You can ship real improvements to real queries, eliminate real errors, add real instrumentation — and still not move the number a user would notice. Because the thing anchoring that number isn't the thing you fixed, and you didn't know that until you could see it, and you couldn't see it until you'd done the instrumentation work, which you couldn't justify until something broke.

There's a circularity to this kind of work that's uncomfortable to admit. The investigation required observability. The observability required investment. The investment required an incident. The incident was caused by the fragility that the observability would have caught earlier. Each piece makes sense in sequence, but the sequence only looks rational in hindsight.

What We Actually Gained

The platform didn't get faster. But it got meaningfully better in ways that are harder to graph.

**It's observable.** We know exactly which batch loader, which resolver, which table scan is responsible for every slow page load. Before this sprint, we were guessing. Now we have spans, and they point at specific functions in specific files.

**It's stable.** Roughly 59,500 fewer errors per week. Fewer silent failures cascading into retry storms. Fewer users refreshing a page because it looked broken when it was actually just lying about its own health.

**It's less fragile.** Database statistics are current. Missing indexes are in place. The specific combination of factors that caused the March incident — stale statistics, missing indexes, connection-holding webhook transactions — can't recur in the same way.

**It's less likely to have another bad Tuesday.** That's not as satisfying as "pages load in 500 milliseconds now." But a system that doesn't collapse under pressure is a system you can build on. A system that collapses unpredictably is one you're afraid to touch.

What We Left on the Table

Six issues are shaped and waiting. Among them: timeout resilience on payment-path operations, so users aren't double-charged when a request hangs; form state persistence, so users don't lose 10 minutes of work when a submission fails; and idempotency keys, so a retry can't create a duplicate record.
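Idempotency keys are the easiest of these to illustrate. The sketch below is a minimal in-memory version of the idea, not a design we've committed to: `OrderStore` and its methods are hypothetical, and a production version would enforce the key with a unique constraint in the database rather than a dictionary.

```python
from typing import Any, Dict


class OrderStore:
    """Creates at most one order per idempotency key."""

    def __init__(self) -> None:
        self._orders: Dict[int, Dict[str, Any]] = {}
        self._order_id_by_key: Dict[str, int] = {}

    def create_order(self, idempotency_key: str, payload: Dict[str, Any]) -> Dict[str, Any]:
        # A retry with the same key returns the original order instead of
        # creating a second one.
        existing_id = self._order_id_by_key.get(idempotency_key)
        if existing_id is not None:
            return self._orders[existing_id]

        order_id = len(self._orders) + 1
        order = {"id": order_id, **payload}
        self._orders[order_id] = order
        self._order_id_by_key[idempotency_key] = order_id
        return order

    def count(self) -> int:
        return len(self._orders)
```

The client generates the key once per user action and reuses it on every retry of that action, so a timeout followed by a retry resolves to the same record.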

The async quoting pipeline needs a more careful rollout — one operation at a time, validated in production before moving to the next.

And the high-frequency query that anchors our p99? We know what it does now. We have the spans. When we decide to invest in making the app actually feel faster, that's where we'll start.

We chose to stop here. Performance tuning is a seductive time sink, especially for an early-stage company. There's always another query to optimize, another span to add, another p99 to shave. The trap is spending months chasing numbers while the product itself isn't changing.

We made the system legible and stable. We know where the time goes. We have the instrumentation to validate future improvements in hours instead of weeks. And we have a backlog of shaped work for when the investment makes sense.

We didn't make it fast. We made it honest. Honest systems are the ones you can actually fix — when it matters.